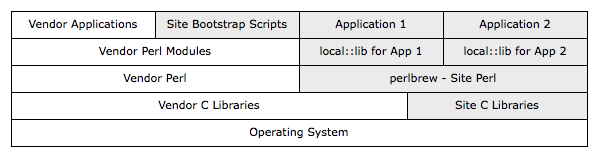

Perl software (and the various supporting C libraries) can be installed onto a system from CPAN in many different ways. Individual users can get away with ad-hoc installs on their personal systems or test virtual instances. Larger sites need to standardize software installation locations and methods, as otherwise time will be wasted while each group forges its own solution. Any shared solution must be well designed, or individual groups will find something unsuitable about it and create their own anyway. Sites must also decide between the various vendor-provided options and a custom software depot. A custom depot costs a site more time to maintain (developing the software, ensuring that security patches are rolled out, educating new users on how to use it), though it may integrate better with other in-house tools or provide features that vendor solutions lack, such as the exact software version required rather than whatever the vendor includes, or the ability to install multiple versions of the same software onto a single system.

Different groups at a site may have different needs; for example, a group responsible for central services or the standard system image may only use vendor software, as nothing else is available when bootstrapping new systems. Other groups may then install additional vendor supplied software, or use their own software depot on top of the standard image. Decisions should be made on whether and what third-party software should be mixed into the vendor space, and if so, how conflicts between vendor and custom software versions will be managed, who will manage the custom software, and so forth. Different approaches will suit different users.

< LeoNerd> and personally speaking, I always use apt rather than cpan. Even if I have to first make the .deb file myself using dh-make-perl. :)

< LeoNerd> Ability to uninstall, upgrade, automatic new version notifications, etc.... are all highly useful

< infrared> damnit. my distro keeps breaking dbic with their older File::Path

Perl-specific tools for software management include App::perlbrew and local::lib. The various vendor ports and package systems usually have some means of converting Perl modules into packages for that system. Ensuring that the system has any required C libraries will need to be handled by some vendor or custom software solution.
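As a rough illustration of what a tool like local::lib arranges, the sketch below points the Perl toolchain at a site-owned directory by setting the environment variables that ExtUtils::MakeMaker, Module::Build, and perl itself consult. The function name `use_site_perl` and the variable `SITE_PERL_ROOT` are made up for this example, and the paths are placeholders. local::lib does more than this (it quotes paths and handles several roots), so treat this as a minimal sketch, not a replacement.

```shell
#!/bin/sh
# use_site_perl [ROOT]
# Point module installs and module lookups at ROOT instead of the vendor
# tree. ROOT defaults to $HOME/perl5. Assumes ROOT contains no spaces.
use_site_perl() {
    SITE_PERL_ROOT=${1:-"$HOME/perl5"}

    # ExtUtils::MakeMaker and Module::Build read these to pick the
    # install prefix for newly built modules.
    PERL_MM_OPT="INSTALL_BASE=$SITE_PERL_ROOT"
    PERL_MB_OPT="--install_base $SITE_PERL_ROOT"

    # perl puts PERL5LIB directories ahead of its built-in @INC entries.
    PERL5LIB="$SITE_PERL_ROOT/lib/perl5${PERL5LIB:+:$PERL5LIB}"

    # Scripts installed by modules land in ROOT/bin.
    PATH="$SITE_PERL_ROOT/bin:$PATH"

    export SITE_PERL_ROOT PERL_MM_OPT PERL_MB_OPT PERL5LIB PATH
}
```

After `use_site_perl /opt/site/perl5` (a hypothetical path), a subsequent `cpan` or `perl Makefile.PL && make install` should write under that directory and leave the vendor-managed tree alone.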

Mixing Vendor & Site Installs

Haphazard software installation methods will lead to conflicts and problems. The severity will depend on the system in question, and how badly the software was managed over time. For example, one may install Perl modules via cpan, and then try to pkg_add another package on OpenBSD that depends on packages for those same CPAN modules. This may result in a conflict, as the default CPAN install location is the same as the OpenBSD package install location:

===> Installing p5-Digest-SHA1-2.12 from /usr/ports/packages/amd64/all/

Collision: the following files already exist

/usr/local/libdata/perl5/site_perl/amd64-openbsd/Digest/SHA1.pm (different checksum)

/usr/local/libdata/perl5/site_perl/amd64-openbsd/auto/Digest/SHA1/SHA1.bs (same checksum)

/usr/local/libdata/perl5/site_perl/amd64-openbsd/auto/Digest/SHA1/SHA1.so (different checksum)

/usr/local/man/man3p/Digest::SHA1.3p (different checksum)

*** Error code 1

Time must then be wasted resolving this conflict. The version installed from CPAN may be higher than that installed by the OpenBSD ports system, and required by some other software, which prevents one from simply removing the files and installing the ports version. Worse, the OpenBSD ports may require the older version to work with some other software.

Try either to separate the vendor software from the site software, or to use only the vendor-supplied ports or package system to manage software installs.
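A collision like the one above can be looked for before anything is installed. The following sketch assumes the files a package would install have been unpacked into a staging directory laid out relative to the install root; `find_collisions` and its arguments are hypothetical names, and it compares file contents with `cmp` instead of the checksums a real package tool records.

```shell
#!/bin/sh
# find_collisions ROOT STAGE
# STAGE holds the files a package would install, laid out relative to ROOT.
# Prints one line per staged file that already exists under ROOT and says
# whether the existing copy matches. Returns 0 only if nothing collides.
find_collisions() {
    fc_root=$1
    fc_stage=$2
    # List staged files as paths relative to STAGE.
    fc_list=$(cd "$fc_stage" && find . -type f | sed 's|^\./||') || return 2
    fc_found=0
    while IFS= read -r fc_rel; do
        [ -n "$fc_rel" ] || continue
        [ -e "$fc_root/$fc_rel" ] || continue
        fc_found=1
        if cmp -s "$fc_stage/$fc_rel" "$fc_root/$fc_rel"; then
            echo "$fc_root/$fc_rel (same contents)"
        else
            echo "$fc_root/$fc_rel (different contents)"
        fi
    done <<EOF
$fc_list
EOF
    [ "$fc_found" -eq 0 ]
}
```

A wrapper might run `find_collisions /usr/local ./stage || exit 1` and refuse to continue, so that the decision about which copy wins is made by a person and not by install order.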

Software via Network Shares

I avoid sharing software via network shares such as NFS. Sharing can create tight coupling between the various systems and the network, such that any network or server failure can cascade to every sharing client, thus increasing the extent of the outage, and likely also increasing the cost and complexity of restoring normal operation. Network shares can also be inappropriate across group or department boundaries, as the different groups may have different funding and availability requirements. Consider a case where clients of a network share operate a critical, availability-sensitive application or workflow, while the central group that operates the network share does not have the headcount to maintain the server in a 24x7 environment. In this case, the client systems must not rely on the network share. I use configuration management, vendor packages, rsync, or git to ensure all systems have whatever software they require available on a local disk, instead of chaining them to a network share.
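As one example of the rsync approach, the sketch below mirrors a software tree onto local disk. The function name `sync_depot` is invented for illustration, and the source could equally be a remote such as `host:/path`. It assumes rsync is installed on the client.

```shell
#!/bin/sh
# sync_depot SRC DEST
# Mirror the software tree at SRC onto local disk at DEST.
sync_depot() {
    sd_src=$1
    sd_dest=$2
    mkdir -p "$sd_dest" || return 1
    # -a preserves permissions, timestamps, and symlinks; --delete removes
    # files that no longer exist in SRC so the local copy does not drift.
    # The trailing slashes copy the contents of SRC, not SRC itself.
    rsync -a --delete "$sd_src"/ "$sd_dest"/
}
```

Run from cron or from configuration management, a failed sync leaves the previous local copy in place, so an outage on the source server delays updates but does not take the clients down with it.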

Network shares may make sense in very constrained situations, such as when a single group operates a cluster, where all the cluster nodes connect to a primary node via a private network. Should the primary node fail, the entire cluster will be rendered unusable, which means it is okay for the cluster nodes to block until the primary node returns. Users of the cluster must be aware of and approve this failure mode, and any software used on the cluster must block or otherwise do the right thing when the server is unavailable. Another case would be virtual servers that rely on resources available on their parent server, as should the parent server fail, the virts will likely also be unavailable.
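What "block until the primary node returns" might look like in a job wrapper is sketched below. `wait_for_path` is a hypothetical helper, and it assumes that a missing share shows up as a missing path; with a hard-mounted NFS share the existence test itself may hang, which is also a form of blocking.

```shell
#!/bin/sh
# wait_for_path PATH [INTERVAL] [MAX_TRIES]
# Block until PATH exists, checking every INTERVAL seconds (default 30).
# MAX_TRIES of 0 (the default) waits forever; otherwise the function gives
# up and returns 1 after that many failed checks.
wait_for_path() {
    wp_path=$1
    wp_interval=${2:-30}
    wp_max=${3:-0}
    wp_tries=0
    until [ -e "$wp_path" ]; do
        wp_tries=$((wp_tries + 1))
        if [ "$wp_max" -gt 0 ] && [ "$wp_tries" -ge "$wp_max" ]; then
            echo "giving up on $wp_path after $wp_tries checks" >&2
            return 1
        fi
        sleep "$wp_interval"
    done
}
```

A cluster job could start with `wait_for_path /shared/depot/bin/perl` (a placeholder path) so that it stalls while the primary node is down and resumes once the share is back.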